42 soft labels machine learning

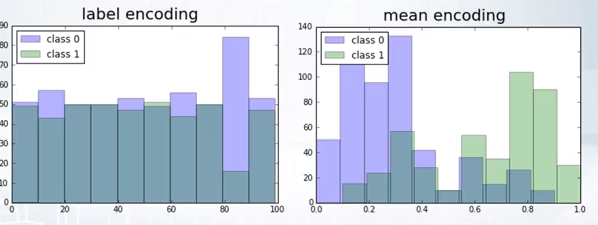

ARIMA for Classification with Soft Labels | by Marco ... Adopting a regression approach to model a binary target is not a great choice. Firstly, misclassifications aren't punished enough. The decision boundary in a classification task is large while, in regression, the distance between two predicted values can be small. Secondly, from a probabilistic point of view, modeling with regression we are making som...

What is data labeling? - aws.amazon.com In machine learning, data labeling is the process of identifying raw data (images, text files, videos, etc.) and adding one or more meaningful and informative labels to provide context so that a machine learning model can learn from it. For example, labels might indicate whether a photo contains a bird or car, which words were uttered in an ...

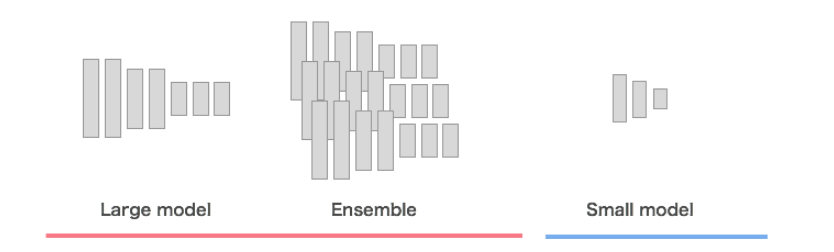

Knowledge distillation in deep learning and its applications Soft labels refers to the output of the teacher model. In case of classification tasks, the soft labels represent the probability distribution among the classes for an input sample. The second category, on the other hand, considers works that distill knowledge from other parts of the teacher model, optionally including the soft labels.
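As a rough illustration of the snippet above, the sketch below turns a teacher model's raw class scores into a soft label. The logits are made-up values, and the `temperature` parameter is an assumption borrowed from common distillation practice rather than something stated in the snippet.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Turn raw class scores into a probability distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical teacher logits for one sample over three classes.
teacher_logits = [4.0, 1.0, 0.5]

# Hard label: only the index of the winning class survives.
hard_label = teacher_logits.index(max(teacher_logits))

# Soft label: the full distribution over classes.
soft_label = softmax_with_temperature(teacher_logits, temperature=1.0)

# A higher temperature (an assumed knob) flattens the distribution.
softer_label = softmax_with_temperature(teacher_logits, temperature=4.0)
```

The hard label keeps only the winning class, while the soft label also records how the teacher ranks the remaining classes.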

[2009.09496] Learning Soft Labels via Meta Learning - arXiv Nidhi Vyas, Shreyas Saxena, Thomas Voice. One-hot labels do not represent soft decision boundaries among concepts, and hence, models trained on them are prone to overfitting. Using soft labels as targets provides regularization, but different soft labels might be optimal at different stages of optimization.

What is Data Labeling? | IBM Data labeling, or data annotation, is part of the preprocessing stage when developing a machine learning (ML) model. It requires the identification of raw data (e.g., images, text files, videos), and then the addition of one or more labels to that data to specify its context for the models, allowing the machine learning model to make accurate predictions.

Learning classification models with soft-label information Objective: Learning of classification models in medicine often relies on data labeled by a human expert. Since labeling of clinical data may be time-consuming, finding ways of alleviating the labeling costs is critical for our ability to automatically learn such models. In this paper we propose a new machine learning approach that is able to learn improved binary classification models more efficiently by refining the binary class information in the training phase with soft labels that ...

Efficient Learning of Classification Models from Soft ... soft-label further refining its class label. One caveat of applying this idea is that soft-labels based on human assessment are often noisy. To address this problem, we develop and test a new classification model learning algorithm that relies on soft-label binning to limit the effect of soft-label noise. We ...

Labeling images and text documents - Azure Machine Learning No machine learning model has 100% accuracy. While we only use data for which the model is confident, these data might still be incorrectly prelabeled. When you see labels, correct any wrong labels before submitting the page. Especially early in a labeling project, the machine learning model may only be accurate enough to prelabel a small ...

Understanding Deep Learning on Controlled Noisy Labels In "Beyond Synthetic Noise: Deep Learning on Controlled Noisy Labels", published at ICML 2020, we make three contributions towards better understanding deep learning on non-synthetic noisy labels. First, we establish the first controlled dataset and benchmark of realistic, real-world label noise sourced from the web (i.e., web label noise ...

Learning Soft Labels via Meta Learning - Apple Machine ... One-hot labels do not represent soft decision boundaries among concepts, and hence, models trained on them are prone to overfitting. Using soft labels as targets provides regularization, but different soft labels might be optimal at different stages of optimization. Also, training with fixed labels in the presence of noisy annotations leads to worse generalization.

What is the difference between soft and hard labels? - reddit Soft Label = probability encoded e.g. [0.1, 0.3, 0.5, 0.2] Soft labels have the potential to tell a model more about the meaning of each sample.

What is the definition of "soft label" and "hard label"? Aug 03, 2021 · A soft label is one which has a score (probability or likelihood) attached to it. So the element is a member of the class in question with a probability/likelihood score of, e.g., 0.7; this implies that an element can be a member of multiple classes (presumably with different membership scores), which is usually not possible with hard labels.

How to Organize Data Labeling for Machine Learning ... You will need to collect and label at least 90,000 reviews to build a model that performs adequately. Assuming that labeling a single comment may take a worker 30 seconds, he or she will need to spend 750 hours or almost 94 work shifts averaging 8 hours each to complete the task. And that's another way of saying three months.

Label smoothing with Keras, TensorFlow, and Deep Learning This type of label assignment is called soft label assignment. Unlike hard label assignments where class labels are binary (i.e., positive for one class and a negative example for all other classes), soft label assignment allows the positive class to have the largest probability, while all other classes have a very small probability.
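A minimal sketch of the soft label assignment described above, assuming the commonly used smoothing rule in which each one-hot entry `y` becomes `(1 - smoothing) * y + smoothing / K` for `K` classes. The function name and the smoothing value of 0.1 are illustrative choices, not taken from the snippets.

```python
def smooth_labels(one_hot, smoothing=0.1):
    """Spread a small share of the probability mass over all classes."""
    num_classes = len(one_hot)
    return [(1.0 - smoothing) * y + smoothing / num_classes for y in one_hot]

# Hard label: class 2 out of four classes.
hard = [0.0, 0.0, 1.0, 0.0]

# Soft label: the positive class keeps the largest probability,
# and every other class receives a small, equal share.
soft = smooth_labels(hard, smoothing=0.1)
```

With four classes and a smoothing value of 0.1, the positive class ends up at 0.925 and each of the other classes at 0.025, so the result is still a valid probability distribution.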

Label Smoothing: An ingredient of higher model accuracy These are soft labels, instead of hard labels, that is 0 and 1. This ultimately gives a lower loss when a prediction is incorrect, so the model is penalized slightly less for that mistake.

What Is Data Labeling in Machine Learning? - Label Your Data Nov 10, 2020 · In machine learning, a label is added by human annotators to explain a piece of data to the computer. This process is known as data annotation and is necessary to show the human understanding of the real world to the machines. Data labeling tools and providers of annotation services are an integral part of a modern AI project.

Classification on Soft Labels is Robust Against Label Noise by C Thiel · Cited by 46: ... on soft labels are more resilient to label noise than those trained on hard labels.

Pseudo Labelling - A Guide To Semi-Supervised Learning There are 3 kinds of machine learning approaches: supervised, unsupervised, and reinforcement learning. Supervised learning, as we know, is where data and labels are present. Unsupervised learning is where only data and no labels are present. Reinforcement learning is where the agents learn from the actions taken to generate rewards.

machine learning - What is the difference between a ... I'm following a tutorial about machine learning basics, and it mentions that something can be a feature or a label. From what I know, a feature is a property of data that is being used. I can't figure out what the label is. I know the meaning of the word, but I want to know what it means in the context of machine learning.

A semi-supervised learning approach for soft labeled data Soft labels indicate the degree of membership of the training data to the given classes. Often only a small number of labeled data is available while unlabeled ...

Is it okay to use cross entropy loss function with soft ... In the case of 'soft' labels like you mention, the labels are no longer class identities themselves, but probabilities over two possible classes. Because of this, you can't use the standard expression for the log loss. But, the concept of cross entropy still applies. In fact, it seems even more natural in this case.
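To make the point above concrete, here is a small sketch of cross entropy computed against a target distribution rather than a single class identity. The function name, the example distributions, and the `eps` guard against `log(0)` are assumptions made for illustration.

```python
import math

def soft_cross_entropy(target, predicted, eps=1e-12):
    """Cross entropy H(target, predicted) = -sum_i target_i * log(predicted_i)."""
    return -sum(t * math.log(max(p, eps)) for t, p in zip(target, predicted))

prediction = [0.2, 0.8]   # model output over two classes

hard_target = [0.0, 1.0]  # one-hot label
soft_target = [0.3, 0.7]  # probabilities over the two classes

# With a one-hot target the sum collapses to the usual log loss, -log(0.8).
loss_hard = soft_cross_entropy(hard_target, prediction)

# With a soft target every class contributes in proportion to its probability.
loss_soft = soft_cross_entropy(soft_target, prediction)

# The loss is smallest when the prediction matches the soft target exactly.
loss_at_target = soft_cross_entropy(soft_target, soft_target)
```

The same formula covers both cases: a hard label is simply a soft label that puts all of its mass on one class.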

Semi-Supervised Learning With Label Propagation Nodes in the graph then have soft labels, or label distributions, based on the labels or label distributions of examples connected nearby in the graph. Many semi-supervised learning algorithms rely on the geometry of the data induced by both labeled and unlabeled examples to improve on supervised methods that use only the labeled data.
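The idea of nodes inheriting label distributions from their neighbors can be sketched on a toy graph. This is a simplified averaging scheme written for illustration, not the algorithm of any particular library; the graph, the seed labels, and the function name are all hypothetical.

```python
def propagate_labels(neighbors, seeds, num_classes, iterations=50):
    """Repeatedly replace each unlabeled node's distribution with the
    average of its neighbors' distributions; seed nodes stay fixed.
    Assumes every node has at least one neighbor."""
    uniform = [1.0 / num_classes] * num_classes
    labels = {node: list(seeds.get(node, uniform)) for node in neighbors}
    for _ in range(iterations):
        updated = {}
        for node, adjacent in neighbors.items():
            if node in seeds:
                updated[node] = list(seeds[node])  # clamp labeled nodes
                continue
            sums = [0.0] * num_classes
            for other in adjacent:
                for c in range(num_classes):
                    sums[c] += labels[other][c]
            updated[node] = [s / len(adjacent) for s in sums]
        labels = updated
    return labels

# Toy chain graph a - b - c - d with only the two end nodes labeled.
neighbors = {"a": ["b"], "b": ["a", "c"], "c": ["b", "d"], "d": ["c"]}
seeds = {"a": [1.0, 0.0], "d": [0.0, 1.0]}

soft = propagate_labels(neighbors, seeds, num_classes=2)
```

On this chain, node `b` settles close to a two-thirds share for the class of `a`, and node `c` close to a two-thirds share for the class of `d`, which reflects how near each unlabeled node is to a labeled one.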

How to Label Data for Machine Learning: Process and Tools ... Data labeling (or data annotation) is the process of adding target attributes to training data and labeling them so that a machine learning model can learn what predictions it is expected to make. This process is one of the stages in preparing data for supervised machine learning.

The Ultimate Guide to Data Labeling for Machine Learning In machine learning, if you have labeled data, that means your data is marked up, or annotated, to show the target, which is the answer you want your machine learning model to predict. In general, data labeling can refer to tasks that include data tagging, annotation, classification, moderation, transcription, or processing.

How to Label Image Data for Machine Learning and Deep ... Anolytics can label all types of images for machine learning and deep learning algorithm training. It annotates images using techniques such as bounding box, semantic segmentation, polygon annotation, polyline annotation, landmarking annotation, or cuboid annotation to make the object of interest easily recognizable to machines ...

How to Label Data for Machine Learning in Python - ActiveState Data labeling in Machine Learning (ML) is the process of assigning labels to subsets of data based on its characteristics. Data labeling takes unlabeled datasets and augments each piece of data with informative labels or tags. Most commonly, data is annotated with a text label.

Features and labels - Module 4: Building and ... - Coursera Module 4: Building and evaluating ML models. After you have assessed the feasibility of your supervised ML problem, you're ready to move to the next phase of an ML project. This module explores the various considerations and requirements for building a complete dataset in preparation for training, evaluating, and deploying an ML model.

Soft Label In Machine Learning - 02/2021 - Course f soft label in machine learning provides a comprehensive pathway for students to see progress after the end of each module. With a team of extremely dedicated and quality lecturers, soft label in machine learning will not only be a place to share knowledge but also to help students get inspired to explore and discover many ...

Python Programming Tutorials How does the actual machine learning thing work? With supervised learning, you have features and labels. The features are the descriptive attributes, and the label is what you're attempting to predict or forecast. Another common example with regression might be to try to predict the dollar value of an insurance policy premium for someone.

One Line To Rule Them All: Generating LO-Shot Soft-Label ... by I Sucholutsky · 2021 · Cited by 1 — Abstract: Increasingly large datasets are rapidly driving up the computational costs of machine learning. Prototype generation methods aim ...

Efficient Learning with Soft Label Information and ... Note that our learning from auxiliary soft labels approach is complementary to active learning: while the latter aims to select the most informative examples, we aim to gain more useful information from those selected. This gives us an opportunity to combine these two approaches.

scikit-learn classification on soft labels - Stack Overflow Cross-entropy loss function can handle soft labels in target naturally. It seems that all loss functions for linear classifiers in scikit-learn can only handle hard labels. So the question is probably: How to specify my own loss function for SGDClassifier, for example.
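The sketch below does not use scikit-learn and does not answer how to plug a custom loss into `SGDClassifier`. It is a from-scratch, one-feature logistic regression, written only to show that gradient descent on cross entropy accepts targets anywhere in [0, 1]. The data, learning rate, and function names are made up for the example.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fit_soft_logistic(xs, soft_targets, lr=0.5, epochs=2000):
    """Fit p(y=1 | x) = sigmoid(w * x + b) to targets in [0, 1]
    by batch gradient descent on the cross-entropy loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = 0.0
        grad_b = 0.0
        for x, t in zip(xs, soft_targets):
            # Gradient of cross entropy w.r.t. the logit is (prediction - target),
            # and this holds for soft targets as well as 0/1 targets.
            err = sigmoid(w * x + b) - t
            grad_w += err * x
            grad_b += err
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b

# Hypothetical one-dimensional data with soft targets for the positive class.
xs = [-2.0, -1.0, 0.0, 1.0, 2.0]
soft_targets = [0.05, 0.2, 0.5, 0.8, 0.95]

w, b = fit_soft_logistic(xs, soft_targets)
predictions = [sigmoid(w * x + b) for x in xs]
```

The fitted model reproduces the graded confidence in the targets instead of forcing each training point to 0 or 1.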

![Reflections Of The Void: [Links of the Day] 25/02/2020 : Tensor flow deployment, Framework for ...](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEgg7TN21qYDKYrg5QNjwOFGQ21bd3iwNKsHz7ItuX2BVlf9-g2eoFAwm_IOt_JhOwW_On3RRkFLDfLMxQyoi_pJI0vDmvAjvkqYnMwlTqnK1FHZJHlCLDCdypYG-3AiZI1CI1t-T8VGxCzG/s1600/1_zNXeQ6mD5z5VD8coStye0w.gif)
